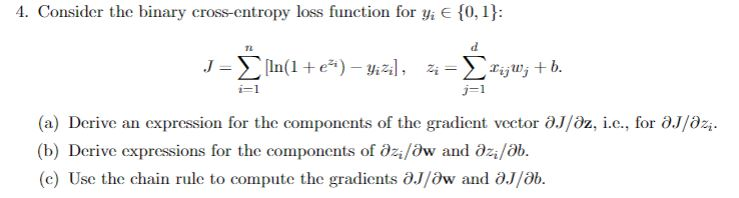

7/2/2023

Binary cross entropy loss

Loss functions are a key part of any machine learning model: they define an objective against which the performance of your model is measured, and the weight parameters learned by the model are determined by minimizing a chosen loss function. There are several common loss functions to choose from: the cross-entropy loss, the mean-squared error, the Huber loss, and the hinge loss, just to name a few.

In this post, I'll discuss three common loss functions: the mean-squared error (MSE) loss, the cross-entropy loss, and the hinge loss. These are the loss functions I've seen used most often in traditional machine learning and deep learning models, so I thought it would be a good idea to figure out the underlying theory behind each one, and when to prefer one over the others. Given a particular model, each loss function has particular properties that make it interesting. For example, the (L2-regularized) hinge loss comes with the maximum-margin property, and the mean-squared error, when used in conjunction with linear regression, comes with convexity guarantees.

The Mean-Squared Loss: Probabilistic Interpretation

For a model prediction such as \(h_\theta(x_i) = \theta_0 + \theta_1 x_i\) (a simple linear regression in 2 dimensions), where the input is a feature vector \(x_i\), the mean-squared error is given by summing across all \(N\) training examples and, for each example, calculating the squared difference between the true label \(y_i\) and the prediction \(h_\theta(x_i)\):

\[J = \frac{1}{N} \sum_{i=1}^{N} \left(y_i - h_\theta(x_i)\right)^2\]

(… should all be computed before applying the updates).

Binary Cross-Entropy Loss / Log Loss

This is the most common loss function used in classification problems. Cross-entropy is the go-to loss function for classification tasks, either balanced or imbalanced. It is the first choice when no preference has been built from domain knowledge yet.

For imbalanced data, the loss would need to be weighted. How does that work in practice? The weight of class \(c\) is the size of the largest class divided by the size of class \(c\).
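To make the mean-squared error described above concrete, here is a minimal sketch in plain Python. It assumes the two-parameter linear model \(h_\theta(x) = \theta_0 + \theta_1 x\) with scalar inputs and the \(1/N\) normalization; the function names and the toy data are made up for illustration.

```python
def predict(theta0, theta1, x):
    """Linear model h_theta(x) = theta0 + theta1 * x (scalar input assumed)."""
    return theta0 + theta1 * x


def mean_squared_error(theta0, theta1, xs, ys):
    """Average of squared differences between labels and predictions."""
    n = len(xs)
    squared_errors = (
        (y - predict(theta0, theta1, x)) ** 2 for x, y in zip(xs, ys)
    )
    return sum(squared_errors) / n


# Toy data generated from y = 1 + 2x (illustrative only).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

perfect = mean_squared_error(1.0, 2.0, xs, ys)  # parameters match the data
off = mean_squared_error(0.0, 2.0, xs, ys)      # every prediction is low by 1
```

With the matching parameters the loss is zero, and shifting the intercept by one makes every residual equal to one, so the loss is one.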
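The post names the binary cross-entropy (log) loss without writing it out. A common form is \(J = -\frac{1}{N}\sum_{i=1}^{N}\left[y_i \log p_i + (1 - y_i)\log(1 - p_i)\right]\), where \(p_i\) is the predicted probability of the positive class. The sketch below assumes that form; the `eps` clipping is an added safeguard against `log(0)`, not something taken from the post.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean binary cross-entropy for labels in {0, 1} and probabilities in [0, 1]."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        # Clip probabilities away from 0 and 1 so the logarithm stays finite.
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


labels = [1, 0, 1, 0]
confident = binary_cross_entropy(labels, [0.9, 0.1, 0.8, 0.2])
uncertain = binary_cross_entropy(labels, [0.5, 0.5, 0.5, 0.5])
```

Predicting 0.5 everywhere gives a loss of \(\log 2\), and confident correct predictions give a smaller value.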
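The class-weighting rule mentioned above (largest class size divided by the size of class \(c\)) could be sketched as follows. How the weights enter the loss is an assumption here: each example's cross-entropy term is multiplied by the weight of its true class, and the total is divided by the sum of the weights. Other normalizations are possible, and the helper names are hypothetical.

```python
import math
from collections import Counter


def class_weights(labels):
    """Weight of class c = size of the largest class / size of class c."""
    counts = Counter(labels)
    largest = max(counts.values())
    return {c: largest / n for c, n in counts.items()}


def weighted_binary_cross_entropy(y_true, y_prob, weights, eps=1e-12):
    """Cross-entropy with per-class weights, normalized by the total weight (assumed)."""
    total, weight_sum = 0.0, 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # keep the logarithm finite
        w = weights[y]
        total += -w * (y * math.log(p) + (1 - y) * math.log(1.0 - p))
        weight_sum += w
    return total / weight_sum


# Imbalanced toy labels: 90 negatives, 10 positives (illustrative only).
labels = [0] * 90 + [1] * 10
weights = class_weights(labels)
loss = weighted_binary_cross_entropy(labels, [0.5] * len(labels), weights)
```

Here the minority class gets weight 9 and the majority class weight 1, so mistakes on the rare class count nine times as much in the total.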
